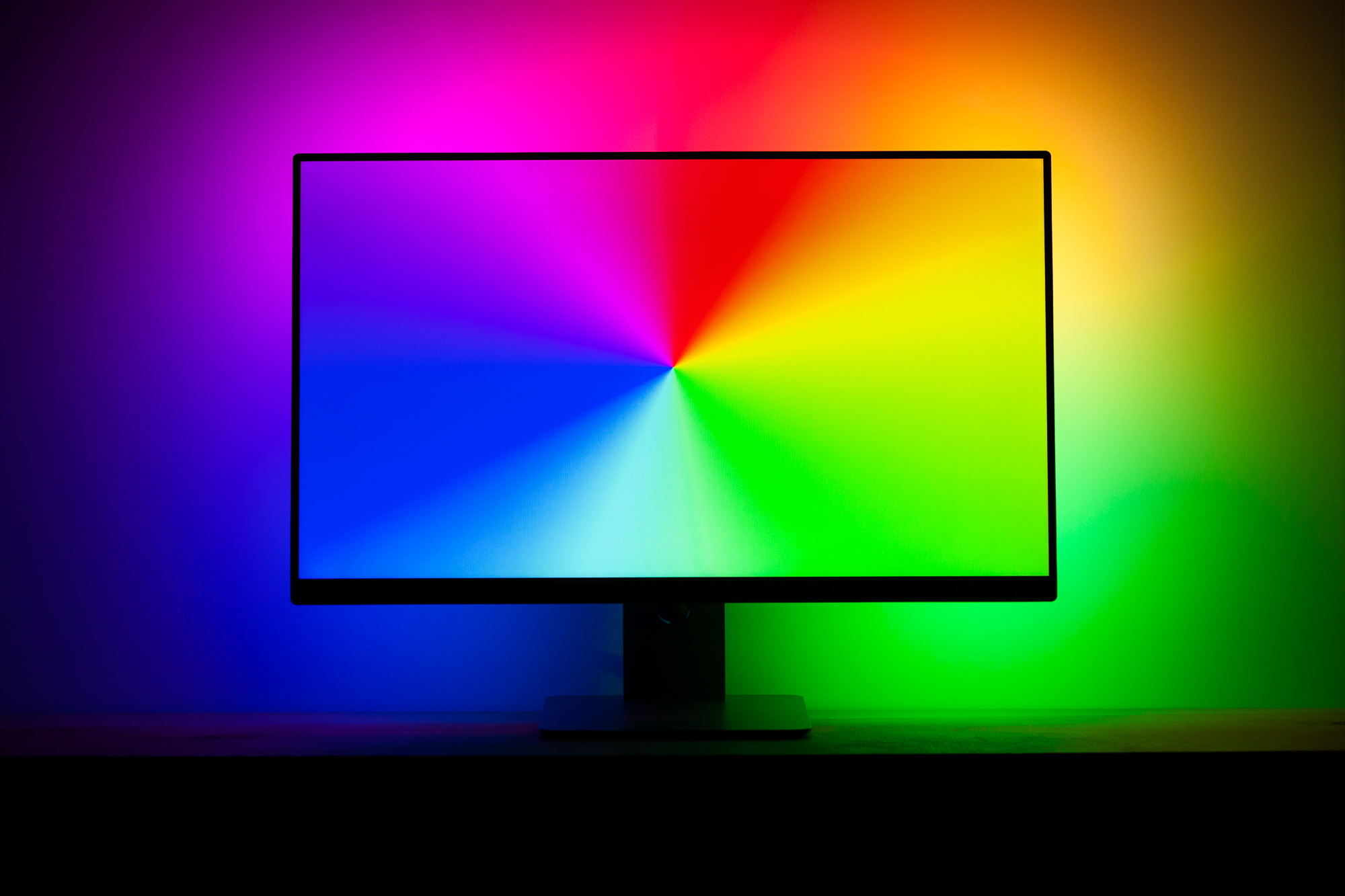

iRacing Plugin for Prismatik

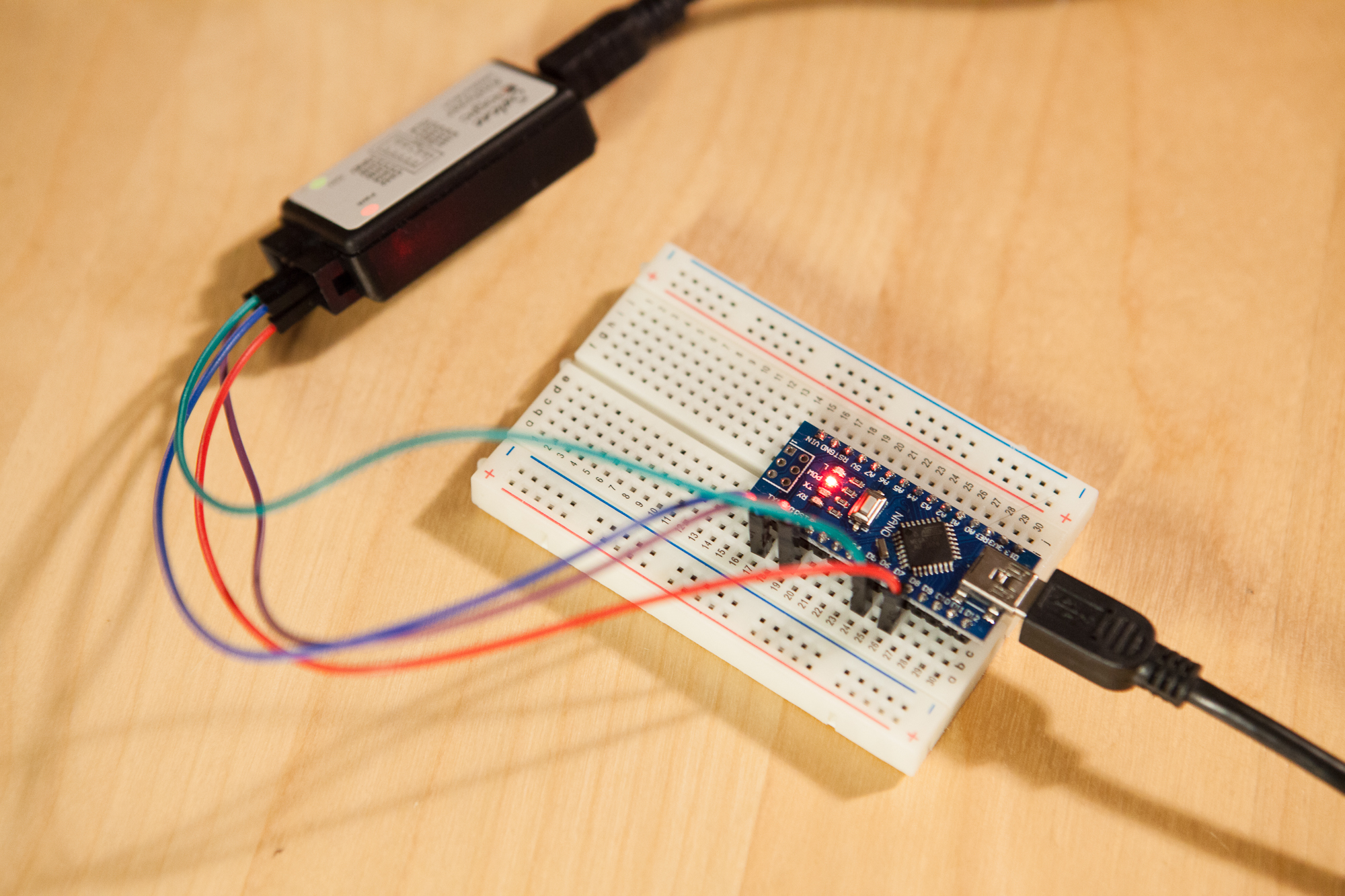

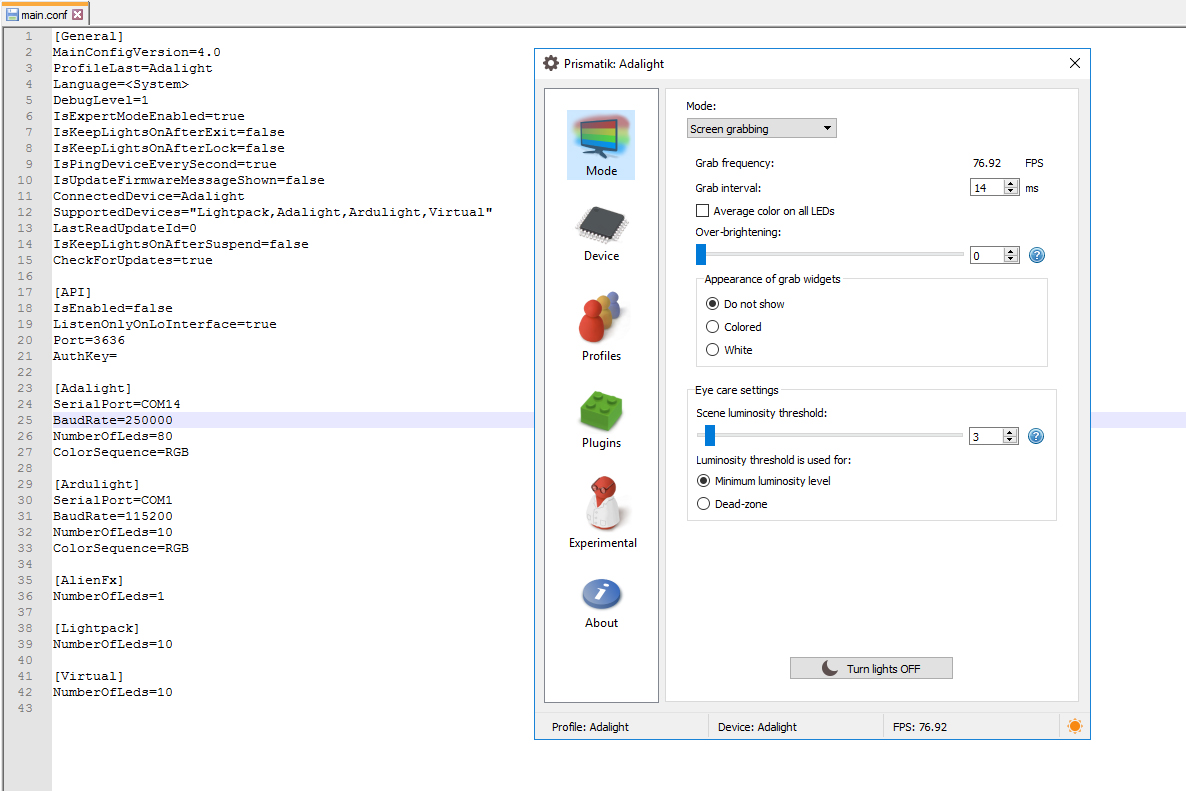

I’ve been trying to teach myself a little Python, and this is what I came up with for my first small project: a plugin for the Prismatik ambilight software that maps live data from the iRacing simulator onto the lights. It talks to both programs through their respective APIs.
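To give a rough idea of what "mapping live data" can look like, here is a minimal sketch and not the plugin's actual code. It assumes the value being mapped is something like engine RPM and that the lights are addressed as a simple list of RGB colors. The function name `rev_bar` and its parameters are hypothetical, and the sketch deliberately makes no calls to the Prismatik or iRacing APIs.

```python
def rev_bar(value, low, high, led_count):
    """Turn one telemetry reading into a list of (r, g, b) colors, one per LED.

    Hypothetical helper for illustration only: `value` could be engine RPM,
    `low`/`high` the range to display, `led_count` the number of lights.
    """
    span = high - low
    # Clamp the reading into the 0.0..1.0 range so out-of-range values are safe.
    fraction = 0.0 if span <= 0 else min(1.0, max(0.0, (value - low) / span))
    lit = round(fraction * led_count)  # how many LEDs should be switched on

    colors = []
    for i in range(led_count):
        if i >= lit:
            colors.append((0, 0, 0))  # LED off
        else:
            # Fade from green (first LED) to red (last LED) along the strip.
            pos = i / max(1, led_count - 1)
            colors.append((int(255 * pos), int(255 * (1 - pos)), 0))
    return colors


if __name__ == "__main__":
    # Example: 6000 RPM on a 0-8000 scale, shown on 10 LEDs.
    for index, color in enumerate(rev_bar(6000, 0, 8000, 10), start=1):
        print(index, color)
```

In a real plugin, the reading would come from the simulator on each update and the resulting color list would be handed to the lighting software. Keeping the mapping in a pure function like this makes it easy to test without either program running.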