In last month’s article, I built my own DIY split-flap display. Although the display is amazing and can show just about anything, when I started the split-flap project I had just one goal in mind: being able to create a dedicated subscriber counter for my YouTube channel.

The Script

The heart of this project is a custom-made Python script. The script queries the YouTube Data API (v3), parses the responses, and sends the filtered result to the split-flap display connected via USB serial.

In addition to the API handling itself, the script also takes a few preparatory steps to deal with the limitations of the split-flap display. For every string sent to the display, the text is first passed through a number of filters to account for size and displayable characters. Long strings are split into multiple lines, large numbers are converted to scientific notation (“E+N”), and all non-displayable characters are replaced with ‘?’.
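As a rough illustration of those three filters (not the script's actual code), here is a minimal sketch. The character set, the 6-character width, the upper-casing step, and all function names are assumptions made for the example; the mantissa is truncated rather than rounded to keep it short.

```python
import string

# Assumed character set and width; the real display's flaps may differ.
DISPLAYABLE = set(string.ascii_uppercase + string.digits + " .,!?+-:")
WIDTH = 6


def sanitize(text, charset=DISPLAYABLE):
    """Upper-case the text (an assumption) and replace unknown characters with '?'."""
    return "".join(c if c in charset else "?" for c in text.upper())


def format_number(value, width=WIDTH):
    """Return the plain digits if they fit, otherwise an 'E+N' form.

    Expects a non-negative integer.
    """
    plain = str(value)
    if len(plain) <= width:
        return plain
    exponent = len(plain) - 1            # power of ten of the leading digit
    suffix = "E+{}".format(exponent)
    digits = width - len(suffix)         # room left for the mantissa
    if digits < 1:
        return "?" * width               # display too narrow for any form
    mantissa = plain[0]
    if digits >= 3:                      # room for "d.d" or more
        mantissa += "." + plain[1:digits - 1]
    return mantissa + suffix


def split_lines(text, width=WIDTH):
    """Break text into chunks of at most `width` characters, on spaces where possible."""
    lines = []
    for word in text.split():
        while len(word) > width:         # hard-split words longer than the display
            lines.append(word[:width])
            word = word[width:]
        if lines and len(lines[-1]) + 1 + len(word) <= width:
            lines[-1] += " " + word
        else:
            lines.append(word)
    return lines
```

Each string would go through `sanitize` first and `split_lines` second, so that every chunk sent over serial is guaranteed to fit the flaps.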

Since the script is statistics-oriented, I also added a dedicated “show stats” function that takes the statistic name (prefix) and value as arguments. It will then automatically adjust the output to show both on a single line or in two steps on separate lines, depending on the size of the display and the length of the combined text. If multiple prefixes are provided (e.g. “Subscribers, Subs, Sub”) it will automatically select the longest displayable one for the available space.
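The prefix selection can be sketched roughly as follows. This is a simplified stand-in rather than the function from the script: the names `choose_prefix` and `stat_frames` are hypothetical, and a single space between prefix and value is assumed.

```python
def choose_prefix(prefixes, value_text, width):
    """Return the longest prefix that fits beside the value, or None."""
    for prefix in sorted(prefixes, key=len, reverse=True):
        if len(prefix) + 1 + len(value_text) <= width:
            return prefix
    return None


def stat_frames(prefixes, value, width):
    """Return the strings to show, one per display update."""
    value_text = str(value)
    prefix = choose_prefix(prefixes, value_text, width)
    if prefix is not None:
        return ["{} {}".format(prefix, value_text)]   # both fit on one line
    # Otherwise show the name first, then the value on its own.
    fitting = [p for p in prefixes if len(p) <= width]
    name = max(fitting, key=len) if fitting else min(prefixes, key=len)[:width]
    return [name, value_text]
```

With the prefixes "Subscribers", "Subs", and "Sub" and a value of 1234, a 16-character display would get a single "Subscribers 1234" frame, a 12-character display "Subs 1234", and a 6-character display two frames: "Subs" followed by "1234".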

With comments and excluding dependencies, version 1.0.0 of the script is around 850 lines long. The script itself is open source (MIT license) and can be downloaded from GitHub, along with some basic instructions for setting it up.

Getting Started

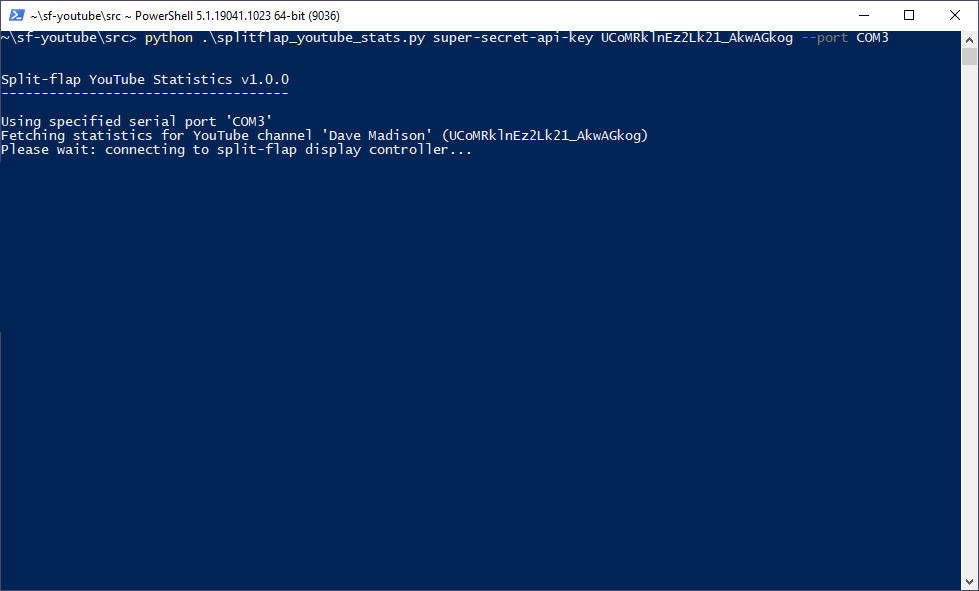

To start showing statistics the script requires three arguments:

- The Google API key for fetching data

- The YouTube Channel ID to get data about

- The serial port device name for the split-flap display (--port)

These can either be provided when invoking the script from the command line, or passed as arguments when importing the splitflap_youtube_stats function in a script. When executed from the command line, the serial port can be omitted and the “first” serial port on the system will be used (as determined by pySerial).
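For reference, picking the "first" port with pySerial might look something like the sketch below. The helper name `first_serial_port` and its injectable `list_ports` argument are hypothetical; only `serial.tools.list_ports.comports()` and the `device` attribute come from pySerial itself.

```python
def first_serial_port(list_ports=None):
    """Return the device name of the first serial port reported, or None."""
    if list_ports is None:
        # pySerial's port enumeration, imported lazily so the helper can be
        # exercised without the library installed.
        from serial.tools.list_ports import comports as list_ports
    ports = list(list_ports())
    return ports[0].device if ports else None
```

Which port counts as "first" depends on the order the operating system reports them in, so passing the port explicitly is the safer choice when more than one serial device is attached.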

Upon startup the script will create a YouTube API instance, open up the serial port to connect to the split-flap display, (optionally) display a series of startup messages, then jump into the main statistics fetch/display loop. Only if a fetch request to the API is successful will data be pushed to the flaps.

I designed the script’s statistic tracking features to be modular. Each tracker is built on a common base class which handles periodic update checks and manages the flow of data from the API to the display. In version 1.0.0 there are three trackers: channel statistics, recent video statistics, and (of course) the subscriber counter.
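To give a feel for the pattern, here is a stripped-down sketch of such a base class. It is not lifted from the script: the class name `StatTracker`, its methods, and the injectable clock are all assumptions for illustration.

```python
import time


class StatTracker:
    """Hypothetical base class: fetch on a fixed interval, display on success."""

    def __init__(self, interval_s, clock=time.monotonic):
        self.interval_s = interval_s
        self._clock = clock
        self._next_due = clock()         # due immediately on the first pass

    def fetch(self):
        """Query the API; return a list of strings, or None on failure."""
        raise NotImplementedError

    def update(self, show):
        """Run one pass of the main loop; `show` writes a string to the display."""
        now = self._clock()
        if now < self._next_due:
            return False                 # not time yet
        self._next_due = now + self.interval_s
        try:
            frames = self.fetch()
        except Exception:                # a failed request must not stop the loop
            frames = None
        if not frames:
            return False                 # nothing reaches the flaps on failure
        for frame in frames:
            show(frame)
        return True
```

A concrete tracker only has to override `fetch`, and the main loop calls `update` on each tracker in turn. Passing the clock in makes the timing logic easy to test without waiting for real minutes to pass.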

Channel Statistics

The first statistic tracker displays information about the channel itself. In order it will show:

- Channel { channel title }

- Views { total views }

- Vids { video count }

By default channel statistics are fetched and shown every hour. By and large I don’t find these numbers that interesting, but if nothing else this lets the display move around to keep dust off. It also lets us know that the display is still functioning even if the subscriber count hasn’t moved (wink wink, nudge nudge).

Recent Video Statistics

The second statistic tracker shows information about the latest video published on the channel. It fetches the latest video in the channel’s ‘uploads’ playlist, and if the video came out recently it will show some statistics:

- “New Vid!”

- { title } (first time video is detected)

- Views { total views }

- Likes { total likes }

- Comments { total comments }

The video’s title is only shown the first time it’s detected so as not to be redundant – particularly since longer titles can take a while to display piece by piece.

By default a video is considered ‘recent’ if it came out in the past 3 days. The script checks for the existence of new videos every 5 minutes, and will show statistics (if a new video is available) every 30 minutes.
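The "recent" check boils down to comparing the video's publish timestamp against the current time. A minimal sketch, assuming the timestamp arrives as a UTC string like `2021-03-01T17:00:00Z` (the function name `is_recent` is made up for this example):

```python
from datetime import datetime, timedelta, timezone


def is_recent(published_at, now=None, max_age=timedelta(days=3)):
    """True if the timestamp is no more than `max_age` in the past."""
    # Older Python versions of fromisoformat() reject a trailing 'Z',
    # so swap it for an explicit UTC offset first.
    published = datetime.fromisoformat(published_at.replace("Z", "+00:00"))
    now = now or datetime.now(timezone.utc)
    return timedelta(0) <= now - published <= max_age
```

Both datetimes are timezone-aware, so the subtraction is safe regardless of the Pi's local timezone setting.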

Subscriber Counter

The last (and most important!) statistics tracker is the subscriber counter. This fetches the latest number of channel subscribers from the API and shows the number on the display:

- Subs +{ change in subscribers } (only if subscriber count increased)

- Subs { subscribers }

If the number of subscribers has increased, the display first shows the size of the increase with a ‘+’ prefix, then the total number of subscribers. The text prefix will automatically change between “Sub”, “Subs”, and “Subscribers” depending on the size of the display and the number of subscribers. It can also be turned off altogether to make a ‘pure’ sub counter with only numbers.

By default the subscriber count is fetched and updated every 2 minutes. By virtue of the frequent update rate, this is the default text which will show on the display when idle.
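In sketch form, the counter logic is just a comparison against the previous value. The helper names below are hypothetical; the response layout follows the YouTube Data API's `channels.list` call with `part='statistics'`, which returns the count as a string.

```python
def subscriber_count(response):
    """Pull the subscriber count out of a channels.list response."""
    return int(response["items"][0]["statistics"]["subscriberCount"])


def subscriber_frames(previous, current, prefix="Subs"):
    """Return the strings to show: the gain (if any), then the total."""
    label = prefix + " " if prefix else ""   # empty prefix gives a 'pure' counter
    frames = []
    if previous is not None and current > previous:
        frames.append("{}+{}".format(label, current - previous))
    frames.append("{}{}".format(label, current))
    return frames
```

Going from 1000 to 1005 subscribers would produce "Subs +5" followed by "Subs 1005", while an unchanged or lower count produces only the total.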

Hosting the Script

While testing I just ran the script from my desktop computer, but for the permanent installation it’s going to run on something a little more lightweight.

I pulled an old Raspberry Pi Zero W out of storage and reformatted it with Raspbian Buster 5.10 Lite. After connecting it to WiFi and enabling SSH, I SFTP’d the script over to the machine and installed the necessary PyPI dependencies. To streamline things I created a little helper Python script with all of the arguments necessary to connect to the YouTube API:

    from splitflap_youtube_stats import splitflap_youtube_stats

    api_key = 'super-secret-api-key'  # YouTube Data API v3
    channel_id = 'UCoMRklnEz2Lk21_AkwAGkog'  # Dave Madison
    port = '/dev/ttyUSB0'

    splitflap_youtube_stats(api_key, channel_id, port, show_intro=True)

And an even smaller bash script to invoke the Python interpreter as a daemon and redirect the program’s output (stdout and stderr) to a log file in the user directory:

    #!/bin/bash
    ((python3 -u /home/pi/youtube_stats/run_stats.py) >>/home/pi/youtube_stats/log.txt 2>&1) &
    exit 0

Note the -u in the invocation of the Python 3 interpreter – this makes the output unbuffered, which means the log file is written in real-time (and not just when the buffer is full).

Last but not least I added a line to /etc/rc.local to invoke the bash script when the Pi starts up, so the YouTube statistics will begin to fetch and display as soon as the board has booted.

    # Run Split-Flap YouTube Stats
    printf "Starting split-flap YouTube stats script...\n"
    bash /home/pi/youtube_stats/run_stats_daemon.sh

To top off the Raspberry Pi setup, I ran some code to make the filesystem “read-only”. This will mitigate corruption issues and allow me to plug / unplug the Pi without having to log in and cleanly shut everything down. That’s the hope at least – I’ll have to see how well it works in practice.

Because the filesystem is read only I had to move the log file to a tmpfs folder stored in RAM. It will get erased on reboot, but I can still log in to check if there’s an issue:

    #!/bin/bash
    touch /tmp/youtube_stats_log.txt
    ((python3 -u /home/pi/youtube_stats/run_stats.py) >>/tmp/youtube_stats_log.txt 2>&1) &
    exit 0

Fixing Time Problems

There’s one problem with making the filesystem read-only: the Raspberry Pi Zero W does not have an onboard real-time clock (RTC) to keep track of the time. Instead it retrieves the time from an online server using the Network Time Protocol (NTP) on boot and saves that time to disk. With a read-only filesystem the retrieved time isn’t stored, so on every boot the clock starts from the moment the filesystem was made read-only and stays wrong until an NTP update succeeds.

This time skew causes issues with SSL/TLS. The security handshake between the server and client has some reliance on the system time, and the script’s requests to Google’s API server started being rejected because the time difference between the server and the Raspberry Pi was too large.

The solution to this problem is in two steps. First, I reconfigured the NTP service to disable the PrivateTmp feature as described here. Then I updated the rc.local file to include a forced update to the time on startup using ntpd:

    # Update system time
    printf "Updating system time via NTP\n"
    service ntp stop
    ntpd -gq
    service ntp start

Quoting from StackExchange:

The “-q” option tells the NTP daemon to start up, set the time and immediately exit. The “-g” option allows it to correct for time differences larger then 1000 sec

https://askubuntu.com/questions/254826/how-to-force-a-clock-update-using-ntp/256004#comment439437_256004

After making these changes to the time updates I’ve had no further issues with the Raspberry Pi setup.

Display Complete!

The finished split-flap display now lives on a shelf in my garage. It’s been running the YouTube statistics script for a few days so far and seems happy as a clam. It’s not only a fun showpiece for the shop, but it also provides some good motivation to keep making more videos!

Future Improvements

While the current setup works great I’m not a huge fan of limiting the display to YouTube statistics. It would be nice to be able to change the output at will, for showing other useful info (events, alarms, emails, etc.) or writing arbitrary strings for fun.

The eventual goal is to switch over to MQTT or some other sort of lightweight messaging protocol, and then have the messages stem from a local server. In an ideal world I would also ditch the Raspberry Pi / Arduino Nano combination in favor of a simpler WiFi-enabled microcontroller such as an ESP8266 or ESP32. Unfortunately that would require another PCB design and some significant rewrites to the underlying firmware, which is more time than I want to invest at the moment.

I’ve been working on this split-flap display in one form or another for over 10 months now, and it’s time to focus my attention on some other projects. On to the next!
